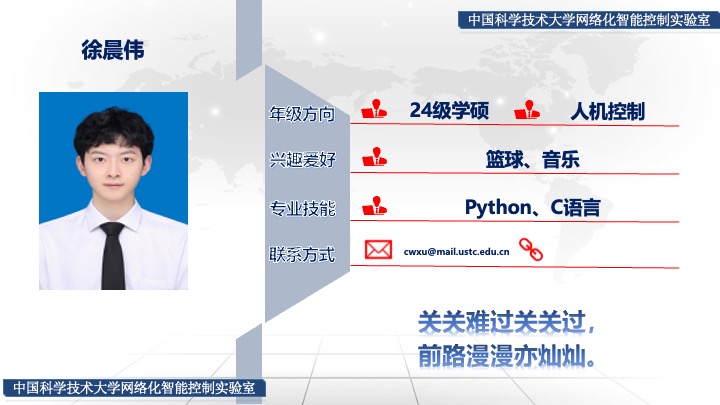

Personal Information

Lab Research Projects

Research on Reinforcement Learning-Based Intelligent and Precise Control of Ventilator Parameters

Academic Achievements

In total: 0 patents authored or co-authored, 1 paper accepted or published, and 1 paper submitted and awaiting acceptance.

Journal Articles

- Human-in-the-Loop Reinforcement Learning with Risk-Aware Intervention and Imitation

  Yaqing Zhou, Yun-Bo Zhao, Chenwei Xu, Chen Ouyang, and Pengfei Li

  Expert Syst. Appl., 2026


Human-in-the-loop reinforcement learning (HiL-RL) improves policy safety and learning efficiency by incorporating real-time human interventions and demonstration data. However, existing HiL-RL methods often suffer from inaccurate intervention timing and inefficient use of demonstration data. To address these issues, we propose a novel framework called HiRIL (Human-in-the-loop Risk-aware Imitation-enhanced Learning), which establishes a closed-loop learning mechanism that integrates risk-aware intervention triggering and imitation-based policy optimization under a dual-mode uncertainty metric. At the core of HiRIL is the Bayesian Implicit Quantile Network (BIQN), which captures both epistemic and aleatoric uncertainty through Bayesian weight sampling and quantile-based return modeling. These uncertainties are combined to generate risk scores for state-action pairs, guiding when to trigger human intervention. To better utilize intervention data, HiRIL introduces a prioritized experience replay mechanism based on risk difference, which emphasizes human interventions that significantly reduce risk. During policy optimization, a local imitation loss is applied to clone human actions at intervention points, enabling risk-guided joint optimization. We conduct extensive experiments on the CARLA end-to-end autonomous driving benchmark. Results show that HiRIL consistently outperforms baselines across multiple metrics and maintains strong robustness under perturbations and non-stationary human intervention.
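The abstract chains several steps: split return uncertainty into epistemic and aleatoric parts, turn them into a risk score, trigger an intervention when risk is high, prioritize replay by how much the human action reduced risk, and clone human actions only at intervention points. The stdlib-only Python sketch below illustrates that chain under stated assumptions; it is not the authors' implementation. The BIQN itself is not implemented (its return quantiles under several sampled weight sets are taken as given), and the law-of-total-variance split, the weighted-sum risk score, the fixed threshold, the PER-style exponent `alpha`, and every function name here are hypothetical choices rather than the paper's definitions.

```python
from statistics import fmean, pvariance
import random


def uncertainty_decomposition(quantile_samples):
    """Split return uncertainty into (epistemic, aleatoric) parts.

    quantile_samples[k][j] is the j-th return quantile predicted under the k-th
    sampled set of network weights (assumed to come from some Bayesian quantile
    network; producing these values is out of scope for this sketch).
    Uses the law of total variance; treating evenly spaced quantiles as equally
    weighted samples only approximates the return variance.
    """
    means = [fmean(q) for q in quantile_samples]  # E_tau[Z] per weight sample
    epistemic = pvariance(means)  # Var_w(E_tau[Z]): disagreement between weight samples
    aleatoric = fmean(pvariance(q) for q in quantile_samples)  # E_w[Var_tau(Z)]
    return epistemic, aleatoric


def risk_score(quantile_samples, w_epi=1.0, w_ale=1.0):
    """Hypothetical risk score: a weighted sum of the two uncertainty terms."""
    epistemic, aleatoric = uncertainty_decomposition(quantile_samples)
    return w_epi * epistemic + w_ale * aleatoric


def should_intervene(risk, threshold):
    """Request a human takeover when risk exceeds a fixed (hypothetical) threshold."""
    return risk > threshold


def risk_difference_priority(risk_agent, risk_human, eps=1e-3):
    """Replay priority: how much the human action lowered risk versus the agent's.

    eps keeps every transition sampleable; interventions that did not reduce
    risk receive only eps.
    """
    return max(risk_agent - risk_human, 0.0) + eps


def sample_indices(priorities, batch_size, alpha=0.6, rng=random):
    """Sample replay indices with probability proportional to priority ** alpha."""
    weights = [p ** alpha for p in priorities]
    return rng.choices(range(len(priorities)), weights=weights, k=batch_size)


def local_imitation_loss(policy_actions, human_actions, intervened):
    """Squared-error behaviour cloning, averaged only over intervention steps."""
    terms = [
        sum((p - h) ** 2 for p, h in zip(pa, ha))
        for pa, ha, took_over in zip(policy_actions, human_actions, intervened)
        if took_over
    ]
    return fmean(terms) if terms else 0.0


if __name__ == "__main__":
    # Two weight samples x four quantiles each; the numbers are made up.
    confident = [[1.0, 1.1, 1.2, 1.3], [1.0, 1.1, 1.2, 1.3]]
    uncertain = [[-2.0, 0.0, 1.0, 3.0], [0.5, 1.5, 2.5, 4.5]]
    print(should_intervene(risk_score(confident), 0.5),
          should_intervene(risk_score(uncertain), 0.5))  # prints: False True
```

In this toy run the "confident" state has identical predictions across weight samples (zero epistemic term) and a narrow quantile spread, so no takeover is requested, while the "uncertain" state scores high on both terms. Clipping the risk difference at zero is one simple way to make replay favor interventions that actually reduced risk, as the abstract describes; the paper may weight or normalize this differently.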